PM2.5 particulates are becoming a major concern as airborne contaminants. These microparticles, with a diameter of 2.5 µm or less, have become subject to new health standards because, once inhaled, they are too small to be discharged from the lungs, and if they remain there for a long time they can increase the risk of lung cancer.

Humans breathe a great deal of air, almost 1500 litres each day. As a result, about 70% of the hazardous substances absorbed by the body come via the lungs. Usually, even if microparticles stick to the air sacs of the lungs, they are dissolved through the normal functions of the lungs. However, substances such as asbestos or silica are materially stable and do not dissolve in the air sacs. The accumulation of these substances in the lungs is thought to lead to a risk of cancer. It has also become quite clear that, near main trunk roads, vehicle exhaust fumes containing active polluting substances further increase the risk of lung cancer.

While the issue of PM2.5 is very topical, contaminants which are generated far away and drift in the air for a long time do not remain active and are therefore not a serious problem. Rather, the most serious concern is air pollution generated nearby. Specifically, this could be exhaust fumes from nearby roads, or asbestos, silica or lead in soil that is blown up by the wind. There should also be concern in special environments, such as pharmaceutical or medical firms that deal with highly potent compounds such as anti-cancer drugs, or sites that handle nanoparticles, typified by carbon nanotubes, which have recently been drawing attention as new materials.

In particular, on premises that deal with such hazardous substances, human exposure during synthesis, production, measurement, separation, collection, etc. is a major issue. The people who manufacture, research and analyze these substances are placed in the most dangerous circumstances in the course of their duties.

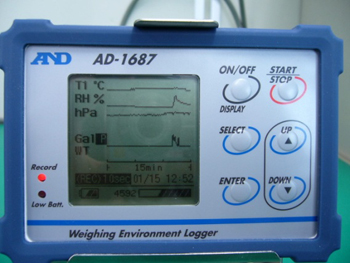

In the measurement of PM2.5 particulates, air is drawn in from outdoors and passed through a filter. An electronic balance is then used to measure the total mass of microparticles caught in the filter. News reports such as those from Beijing, where PM2.5 levels have exceeded 300 micrograms per cubic meter of air, show that PM2.5 pollution levels are determined by weighing. Incidentally, the measurement environment for PM2.5 particulates is subject to strict requirements. In order to stabilize measurement with the analytical balance and reduce errors due to moisture absorption, etc., it is stipulated that the measurement environment must be kept at a temperature of 21.5 ±1.5°C and a relative humidity of 35 ±5%. Also, because the fluorinated filters used to trap microparticles effectively become statically charged very easily, a balance with electrostatic elimination capability is required as well.
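As a rough illustration of the arithmetic behind such a weight-based measurement, the sketch below converts the mass gained by a filter into a concentration and checks whether a weighing environment falls inside the stipulated window. The function names, the filter masses and the 24 m³ sampled air volume are hypothetical values chosen for illustration; only the temperature and humidity limits come from the text above.

```python
# Limits quoted in the text: 21.5 +/- 1.5 degC and 35 +/- 5 % relative humidity.
TEMP_TARGET_C = 21.5
TEMP_TOL_C = 1.5
RH_TARGET_PCT = 35.0
RH_TOL_PCT = 5.0


def environment_ok(temp_c: float, rh_pct: float) -> bool:
    """Return True if temperature and humidity are inside the stipulated window."""
    return (abs(temp_c - TEMP_TARGET_C) <= TEMP_TOL_C
            and abs(rh_pct - RH_TARGET_PCT) <= RH_TOL_PCT)


def pm25_concentration_ug_m3(mass_before_mg: float,
                             mass_after_mg: float,
                             air_volume_m3: float) -> float:
    """Concentration in micrograms per cubic meter from a filter's mass gain.

    mass_before_mg / mass_after_mg: filter mass before and after sampling (mg).
    air_volume_m3: volume of air drawn through the filter (m^3).
    """
    if air_volume_m3 <= 0:
        raise ValueError("air volume must be positive")
    collected_ug = (mass_after_mg - mass_before_mg) * 1000.0  # mg -> ug
    return collected_ug / air_volume_m3


if __name__ == "__main__":
    # Hypothetical example: the filter gains 7.2 mg while 24 m^3 of air is sampled.
    c = pm25_concentration_ug_m3(100.0, 107.2, 24.0)
    print(f"{c:.1f} ug/m^3")            # prints "300.0 ug/m^3"
    print(environment_ok(22.0, 37.0))   # inside the window -> True
    print(environment_ok(25.0, 37.0))   # too warm -> False
```

In this hypothetical case a gain of only 7.2 mg already corresponds to 300 micrograms per cubic meter, which suggests why small disturbances from moisture, static charge or air currents matter so much to the weighing.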

At first glance these standards seem quite appropriate, but keeping the temperature and humidity of a room containing balances at constant levels requires strong air currents, which disturb the balance and make stable measurement very difficult. Further, these strong air currents stir the hazardous substances trapped in the filters up into the air, where they may be inhaled by the researchers conducting the measurement, which poses a significant problem. These issues of exposure apply not only to PM2.5 particulates, but to all the other hazardous substances mentioned above.

Meanwhile, in recent years devices developed in Europe called balance enclosures, which can contain hazardous substances, have been drawing a lot of attention as a solution to this problem. Balance enclosures provide the dual functionality of containing these dangerous microparticles and stabilizing the weight display of the balance. Compared with completely sealed glove boxes, or with fume hoods, which can only perform forced exhaust, the balance enclosure is distinctive in that it allows hazardous microparticles to be contained easily and reliably. It then occurred to me that creating a measurement environment for PM2.5 particulates by drawing on these features of enclosures might also resolve the problem of exposure to hazardous substances. The remaining problem is how to control the temperature and humidity of the air inside the enclosure during measurement.

Achieving the required measurement environment for PM2.5 particulates for the entire room where an analytical balance is installed requires significant initial facility setup costs, as well as large regular running costs. Also, as mentioned earlier, there is a problem of researchers being exposed to hazardous substances, and it is clear that the balance display inevitably becomes unstable in the end. Further, the high initial cost of the large-scale facilities like those currently being introduced to prefectural institutes for environmental studies is making PM2.5 research very difficult for private enterprises which do not have access to public funds.

Considering these circumstances, I wondered whether there might be a method for managing the internal humidity and temperature of such a balance enclosure while also keeping the safety functionality of the device. At that point, the fact that we were manufacturing balance enclosures domestically as an original product suddenly took on added value for us. That is because I conceived the idea that the problems mentioned above could be solved by connecting a balance enclosure to both a temperature and humidity controller and a high-precision filter unit, and then connecting the temperature and humidity controller to the outlet of the filter unit.

Combining these three devices (balance enclosure, temperature and humidity controller, and filter unit) is quite simple, but the combination is rendered meaningless if the circulating air inside the unit escapes through the opening in the front provided for operating the measurement apparatus inside. In order to solve this problem, we tested the flow path of the air. As a result, we found that letting air flow in from the top of the enclosure, stopping this flow with a baffle positioned at the top, and exhausting the air through the side of the enclosure successfully causes air to flow in through the opening at the front rather than out of it. Incidentally, a baffle is a type of control plate configured to control the flow of fluids such as air. The proposed features of this system are not yet found in similar systems either in Japan or overseas, so a patent application was filed for the assembly and internal structure of the system.

We believe the weighing system proposed here will be widely used for creating a measurement environment for hazardous substances, including PM2.5 particulates. It can be offered at a low price with no further installation work required, allows for stable measurement, and fully protects the operator from the hazardous substances being measured.

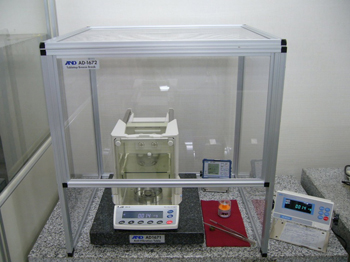

The model below provides a visualization of the basic components of the system.

I have been publishing these Development Stories for over three years now. Upon reflection, I feel that a great number of technical terms appear in each installment, and that the details are often quite hard to understand. I have therefore added a previously untold story to this episode. About eight years ago, I was talking to a contractor who was building one of the core components of our balances using a special resin. What he told me then was that people who build or develop new things could be considered technological wizards who can magically pull marvelous new creations out of a hat. The component he was building featured an innovative structure and materials that were world firsts in the balance field. Before that, however, every resin casting contractor I had met had given the same answer after seeing the plans and discussing the design: “it’s impossible”. I therefore had a big favor to ask of this developer, whom I was meeting for the first time. While it was extremely tough for him, the design and production of the part was finally completed, and it went on to be successfully incorporated into all further A&D balances, resulting in a dramatic improvement in the basic functionality of balances.

Returning to my earlier discussion, at first I did not understand the meaning of the comment that developers are “technological wizards”. What he was getting at was that developing something that did not previously exist in this world is an incredibly creative process that requires a bit of special magic. Prior to that meeting, I had already made it my credo as a developer to realize products that surpass competitors’ in functionality and cost effectiveness. But after being called a wizard, I came to think that, considering my role as a developer in an industrial nation, I should refrain from simply bringing to market a reduced-cost version of previously available products and instead aim for some real magic. From that time onwards, I resolved that whenever I was not working on a product with nothing similar on the market, or on a product targeted toward a special market, I would at the very least add some new value or function to the product in my development work.

In fact, from around that time onwards, A&D has released various products that are completely new creations, such as a pipette leak tester, weighing data logger, environment logger, tuning fork vibro rheometer and balance analyzer. These products have not been as easy to sell in Japan as our other, more traditional types of products. This may be because A&D is weak in developing sales routes for products that require detailed explanations, but we could also say that Japanese customers, particularly in relation to industrial products, are not open to trying unique new products and instead wait for new developments to be accepted overseas before they make their way to Japan. On the basis of that supposition, I fear that our new weighing system for hazardous substances will not sell easily in the Japanese market as a domestic product. However, whether a new product sells well or not, as a person engaged in new product development and technology in an industrialized nation, I remain fully committed to continuing the development of unique and exciting new products.